Generic Webhook — Engineering

For developers building or maintaining the Delivery Service and the Configuration API.

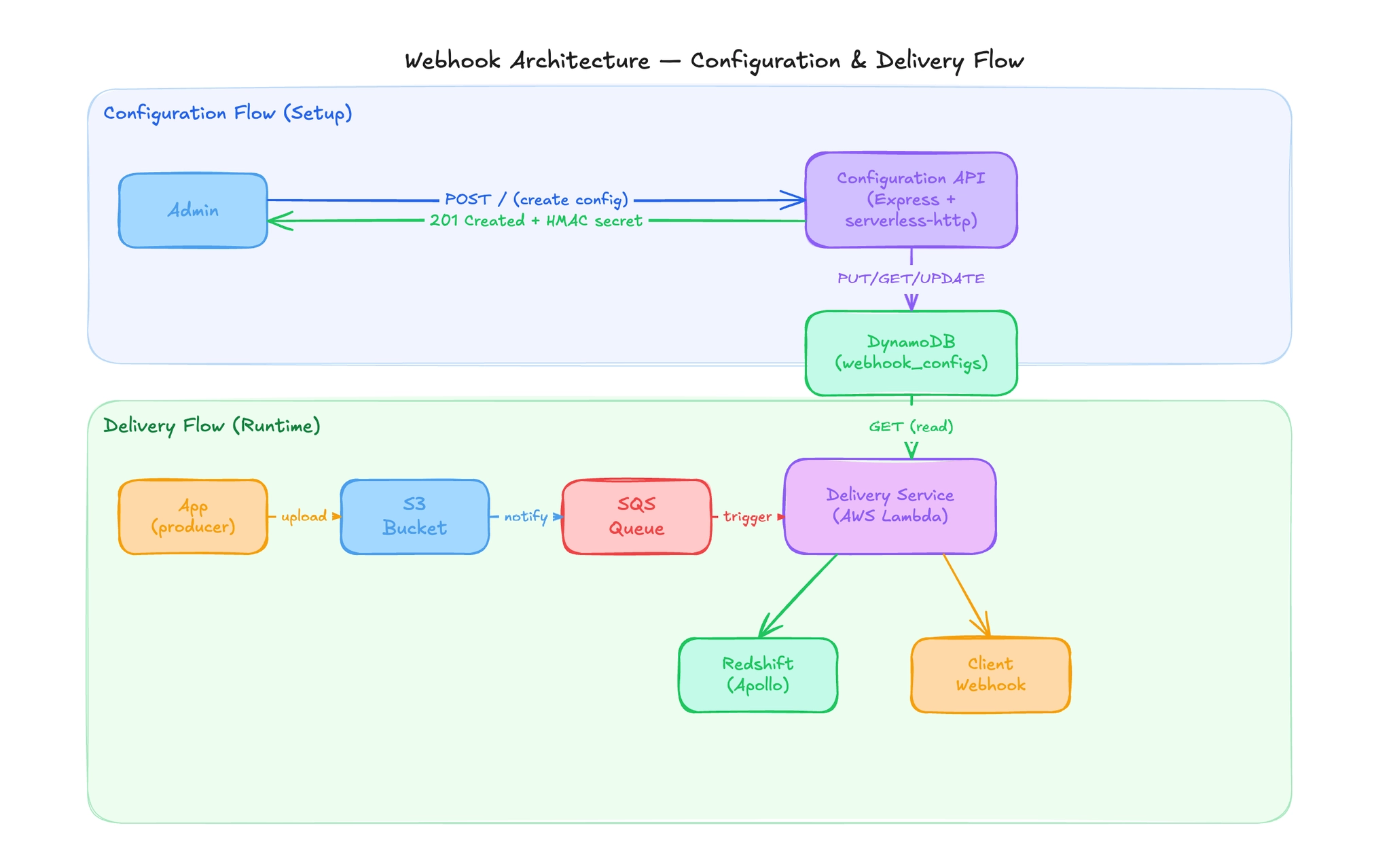

System architecture

Two separate services share one DynamoDB table:

Configuration API (ind/generic-webhook)

- Express.js app wrapped in serverless-http for Lambda deployment

- CRUD endpoints for webhook configs stored in DynamoDB

- JWT (RS256) or appId/appKey authentication with IP whitelisting

Delivery Service (global/generic-webhook)

- Raw AWS Lambda triggered by SQS

- Bucket-agnostic: reads from whichever S3 bucket is named in the S3 event notification delivered through SQS

- Reads configs from DynamoDB, signs payloads, delivers to client URLs

- Logs delivery metrics to Redshift via Apollo service

Codebase structure

Delivery Service (global/generic-webhook)

├── index.js # Lambda handler + all core business logic

├── utils.js # Redshift log payload builders

├── package.json # aws-sdk, axios, fast-json-stable-stringify, lodash

├── .gitlab-ci.yml # CI/CD - deploys to ap-south-1, REPLICATE=TRUE

├── test-bugs.js # Bug regression tests (run with: node test-bugs.js)

└── database/

├── knexfile.js # Redshift connection config (client: "redshift", port: 5439)

├── package.json

└── migrations/

├── 20240131070646_addGenericWebhookTable.js

├── 20241204022244_addProcessingTimeFields.js

└── 20241204022715_addGenericWebhookFailureTable.js

Configuration API (ind/generic-webhook)

├── index.js # Express app entry point + route definitions

├── controller.js # Route handlers (storeConfig, getConfigs, updateConfig)

├── middlewares.js # IP whitelist + auth middleware

├── utils.js # generateSecret, getPartiallyMaskedString

├── package.json # express, serverless-http, joi, aws-sdk, kubera-logger

├── .gitlab-ci.yml # CI/CD - PROJECT=generic-webhook-api

└── utils/

├── dynamoDb.js # storeData, getData, updateData

└── inputValidator.js # Joi schemas + validate middleware

Delivery Service

Batch Processing

- AWS Lambda entry point for SQS events

- Processes messages in parallel

- Supports partial batch failure handling using SQS retry mechanisms

Per-Message Flow

For each incoming message:

- Extracts S3 bucket and file key from the message

- Fetches webhook payload (webhook.json) from S3

- Retrieves configuration from DynamoDB using identifiers

- Skips processing if the configuration is inactive

- Optionally removes identifiers from payload based on config flags

- Generates an HMAC signature if a secret is configured

- Determines target webhook URLs using routing/workflow logic

- Sends the webhook request
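The first step of this flow can be sketched as follows. This is a minimal illustration, assuming each SQS record body is a standard S3 event notification (a JSON document with a Records array). The function name extractS3Location is hypothetical and the real handler in index.js may be structured differently.

```javascript
// Hypothetical helper: pull the bucket name and object key out of one SQS record.
// Assumes the SQS body is a standard S3 event notification.
function extractS3Location(sqsRecord) {
  const body = JSON.parse(sqsRecord.body);
  const s3Event = body.Records && body.Records[0];
  if (!s3Event || !s3Event.s3) {
    throw new Error('SQS record does not contain an S3 event notification');
  }
  return {
    bucket: s3Event.s3.bucket.name,
    // S3 URL-encodes object keys in notifications and uses "+" for spaces.
    key: decodeURIComponent(s3Event.s3.object.key.replace(/\+/g, ' ')),
  };
}
```

Because the bucket name comes from the notification itself, a helper like this stays bucket-agnostic: nothing about the bucket needs to be configured in the Lambda.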

Webhook Delivery

- Sends requests to multiple URLs in parallel

- Uses isolated HTTP clients per configuration (for SSL customization)

- Logs delivery lifecycle events:

- Before sending (pre-delivery)

- After success (post-delivery)

- On failure (error logging)

- Failed deliveries are retried through SQS unless the appId is listed in APP_IDS_TO_SILENTLY_IGNORE_ERRORS_FOR_RETRY

Configuration Fetching

- Retrieves configuration using a composite key

- Returns full configuration or null if not found

HMAC Signature Generation

- Uses deterministic JSON serialization (sorted keys)

- Signature format: transactionId + "_" + serializedPayload

- Uses SHA256 and returns a hex digest
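The signing steps above can be sketched with Node's built-in crypto module. This is an illustration under stated assumptions, not the service's actual code: stableStringify is a dependency-free stand-in for a sorted-key serializer (the Delivery Service's package.json lists fast-json-stable-stringify, whose output may differ in edge cases), and the function name signPayload is hypothetical.

```javascript
const crypto = require('crypto');

// Stand-in deterministic serializer for plain JSON data: object keys are
// sorted recursively so equal payloads always produce the same string.
function stableStringify(value) {
  if (value === null || typeof value !== 'object') {
    return JSON.stringify(value);
  }
  if (Array.isArray(value)) {
    // Mirror JSON.stringify, which renders undefined array items as null.
    const items = value.map((item) => (item === undefined ? 'null' : stableStringify(item)));
    return '[' + items.join(',') + ']';
  }
  const entries = Object.keys(value)
    .sort()
    .filter((key) => value[key] !== undefined)
    .map((key) => JSON.stringify(key) + ':' + stableStringify(value[key]));
  return '{' + entries.join(',') + '}';
}

// HMAC-SHA256 over transactionId + "_" + serializedPayload, as a hex digest.
function signPayload(transactionId, payload, secret) {
  const message = transactionId + '_' + stableStringify(payload);
  return crypto.createHmac('sha256', secret).update(message).digest('hex');
}
```

Sorting keys matters because a receiver that recomputes the signature must serialize the payload to exactly the same bytes, regardless of the key order its JSON parser produced.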

Logging / Telemetry

- Sends delivery logs to a telemetry service (for Redshift ingestion)

- Includes timing and delay metrics

- Logging is non-blocking and does not interrupt delivery flow

Utility Logic (Delivery Side)

Logging Payload Builder

- Constructs structured logs for:

- Pre-delivery (processing timestamps and delays)

- Post-delivery (delivery timestamps and delays)

Error Logging

- Extracts HTTP status and error message from failed requests

- Formats structured failure logs
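As a rough sketch of the extraction step, assuming an axios-style error object (where error.response is present only when the server actually replied): the helper name extractFailureDetails, the fallback to error.code, and the 'UNKNOWN' placeholder are all assumptions made for illustration.

```javascript
// Hypothetical helper: reduce a failed HTTP request to the two fields a
// failure log needs. Assumes an axios-style error shape.
function extractFailureDetails(error) {
  let statusCode = 'UNKNOWN'; // assumed placeholder when nothing better exists
  if (error && error.response) {
    statusCode = String(error.response.status); // the server replied with a status
  } else if (error && error.code) {
    statusCode = String(error.code); // e.g. a timeout or connection error code
  }
  const errorMessage = (error && error.message) || 'Unknown error';
  return { statusCode, errorMessage };
}
```

The status is converted to a string here because the status_code column in generic_webhook_failure is a VARCHAR.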

Array Validation

- Utility to check if a value is a non-empty array

- Used for validating workflow routing inputs
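A check of this kind is typically a one-liner. The sketch below assumes the name isNonEmptyArray, which is hypothetical.

```javascript
// True only for real arrays with at least one element.
function isNonEmptyArray(value) {
  return Array.isArray(value) && value.length > 0;
}
```

Using Array.isArray rather than checking a length property avoids treating strings or array-like objects as valid routing input.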

Configuration API

Create Configuration

- Stores webhook configuration in DynamoDB

- Can generate a secret if requested

- Logs masked versions of sensitive fields

Fetch Configuration

- Retrieves configuration from DynamoDB

- Secret is excluded unless explicitly requested

Update Configuration

- Dynamically updates only provided fields

- Primary keys remain immutable

- Supports secret rotation
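One common way to update only the provided fields is to build the DynamoDB UpdateExpression dynamically. The sketch below is an assumption about the approach, not a copy of utils/dynamoDb.js: the key attribute names (appId, eventType) and the function name buildUpdateParams are hypothetical. The returned object uses the standard parameter names accepted by the DynamoDB DocumentClient update call.

```javascript
// Hypothetical key attribute names; the real table's primary key may differ.
const IMMUTABLE_KEYS = ['appId', 'eventType'];

// Build update parameters that SET only the fields present in `changes`.
function buildUpdateParams(tableName, key, changes) {
  const names = {};
  const values = {};
  const assignments = [];

  Object.entries(changes).forEach(([field, value], index) => {
    // Primary key attributes are never updated; undefined fields are skipped.
    if (IMMUTABLE_KEYS.includes(field) || value === undefined) return;
    names[`#f${index}`] = field; // placeholder avoids reserved-word clashes
    values[`:v${index}`] = value;
    assignments.push(`#f${index} = :v${index}`);
  });

  if (assignments.length === 0) {
    throw new Error('No updatable fields provided');
  }

  return {
    TableName: tableName,
    Key: key,
    UpdateExpression: 'SET ' + assignments.join(', '),
    ExpressionAttributeNames: names,
    ExpressionAttributeValues: values,
  };
}
```

Secret rotation fits the same pattern: a newly generated secret would simply be one more entry in changes.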

Middleware

IP Whitelisting

- Restricts access based on allowed IPs

- Supports a wildcard (*) that allows all IPs

- Rejects requests if validation fails
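The whitelist check can be sketched as an Express-style middleware. This is illustrative only: the function names are hypothetical, the 403 response is an assumption, and how the real service determines the client IP (req.ip, a forwarded header, or the API Gateway context) is not covered in this document.

```javascript
// Parse an ALLOWED_IPS-style value: a comma-separated list, or "*" for all.
function parseAllowedIps(raw) {
  return (raw || '')
    .split(',')
    .map((ip) => ip.trim())
    .filter(Boolean);
}

function isIpAllowed(clientIp, allowedIps) {
  return allowedIps.includes('*') || allowedIps.includes(clientIp);
}

// Express-style middleware factory. Reading req.ip is an assumption.
function ipWhitelist(rawAllowedIps) {
  const allowedIps = parseAllowedIps(rawAllowedIps);
  return (req, res, next) => {
    if (isIpAllowed(req.ip, allowedIps)) return next();
    return res.status(403).json({ error: 'IP not allowed' });
  };
}
```

Note that an empty or unset list rejects every request in this sketch, which is the safer default.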

Authentication

Supports two methods:

- JWT-based

- Verifies the RS256 token using the public key in PUBLIC_CERT

- Extracts identifier from token

- Credential-based

- Validates identifier + key via external service

- Uses in-memory caching (15-minute TTL)
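The credential path with its 15-minute cache can be sketched as below. Everything here is an assumption for illustration: the TtlCache class, the validateRemotely callback standing in for the external service, and the choice to cache only successful validations.

```javascript
const CACHE_TTL_MS = 15 * 60 * 1000; // 15 minutes

// Minimal in-memory cache with per-entry expiry.
class TtlCache {
  constructor(ttlMs = CACHE_TTL_MS, now = Date.now) {
    this.ttlMs = ttlMs;
    this.now = now; // injectable clock, useful in tests
    this.entries = new Map();
  }

  get(key) {
    const entry = this.entries.get(key);
    if (!entry) return undefined;
    if (this.now() >= entry.expiresAt) {
      this.entries.delete(key); // expired: drop and report a miss
      return undefined;
    }
    return entry.value;
  }

  set(key, value) {
    this.entries.set(key, { value, expiresAt: this.now() + this.ttlMs });
  }
}

// Check the cache first, then fall back to the external service.
// `validateRemotely` is a hypothetical async function returning true or false.
async function validateCredentials(appId, appKey, cache, validateRemotely) {
  const cacheKey = `${appId}:${appKey}`;
  if (cache.get(cacheKey) === true) return true;
  const valid = await validateRemotely(appId, appKey);
  if (valid) cache.set(cacheKey, true); // assumption: failures are not cached
  return valid;
}
```

Since the cache lives in the Lambda's memory, it is only shared across invocations served by the same warm container.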

Input Validation

- Uses schema-based validation for incoming requests

- Rejects invalid input with appropriate error responses

Supported Inputs

- Required identifiers and configuration fields

- Optional flags for:

- Headers

- Secret updates

- Workflow routing

- Payload modification

DynamoDB Operations

Database schemas

Redshift - generic_webhook (success logging)

One row per successful delivery.

CREATE TABLE generic_webhook (

id BIGINT IDENTITY(1,1) PRIMARY KEY,

app_id VARCHAR(40),

transaction_id VARCHAR(256),

event_id VARCHAR(256),

event_type VARCHAR(256),

hv_event_name VARCHAR(100),

env VARCHAR(40),

additional_properties VARCHAR(65535),

webhook_delivary_delay INTEGER, -- ⚠️ typo in column name - do not rename

event_start_timestamp TIMESTAMP,

webhook_delivery_timestamp TIMESTAMP,

original_timestamp TIMESTAMP,

webhook_processing_timestamp TIMESTAMP,

webhook_processing_delay INTEGER

);

Redshift - generic_webhook_failure (failure logging)

One row per failed delivery attempt.

CREATE TABLE generic_webhook_failure (

id BIGINT IDENTITY(1,1) PRIMARY KEY,

app_id VARCHAR(40),

transaction_id VARCHAR(256),

status_code VARCHAR(40),

error_message VARCHAR(256),

event_type VARCHAR(256),

hv_event_name VARCHAR(100),

env VARCHAR(40),

additional_properties VARCHAR(65535),

original_timestamp TIMESTAMP

);

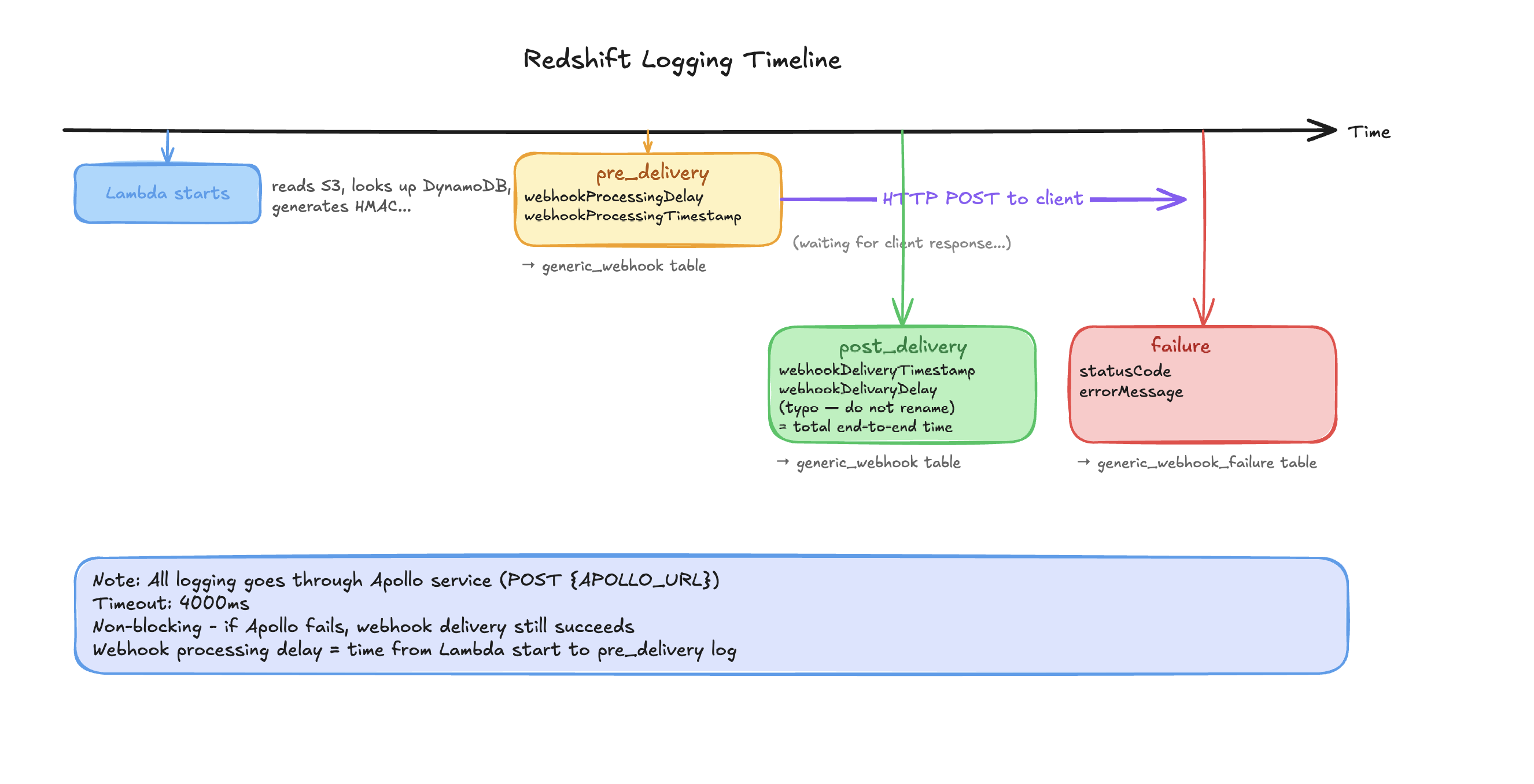

Redshift logging via Apollo

The Delivery Service logs to Redshift by POSTing to the Apollo service:

POST {APOLLO_URL}

Headers:

appid: {APPID}

appkey: {APPKEY}

Body:

{

"project": "{PROJECTNAME or FAILURE_PROJECTNAME}",

"properties": {

...payload,

"env": "{REDSHIFTENV}"

}

}

Three log event types:

- pre_delivery: Logged before the webhook POST. Includes webhookProcessingTimestamp and webhookProcessingDelay (time from Lambda start to delivery).

- post_delivery: Logged after a successful POST. Includes webhookDeliveryTimestamp and webhookDelivaryDelay (⚠️ typo, do not rename).

- failure: Logged on delivery error. Includes statusCode and errorMessage. Written to the FAILURE_PROJECTNAME table.

Logging is non-blocking. If Apollo fails, delivery still succeeds.
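A minimal sketch of the request described in this section, assuming the HTTP call is injected as httpPost(url, body, config) with the same argument order as axios.post. The function names buildApolloBody and logToApollo are hypothetical, and env stands for the service's environment variables.

```javascript
// Build the request body: project name plus payload tagged with the environment.
function buildApolloBody(payload, env, isFailure = false) {
  return {
    project: isFailure ? env.FAILURE_PROJECTNAME : env.PROJECTNAME,
    properties: { ...payload, env: env.REDSHIFTENV },
  };
}

// Send one log entry without letting a logging failure affect delivery.
// `httpPost` is a hypothetical stand-in shaped like axios.post.
async function logToApollo(httpPost, payload, env, isFailure = false) {
  try {
    await httpPost(env.APOLLO_URL, buildApolloBody(payload, env, isFailure), {
      headers: { appid: env.APPID, appkey: env.APPKEY },
    });
    return true;
  } catch (error) {
    // Swallow the error: the webhook delivery result must not depend on logging.
    console.error('Apollo logging failed:', error.message);
    return false;
  }
}
```

Catching the error inside the logger is what keeps the caller's flow intact: a rejected promise never propagates into the delivery path.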

Database migrations

Migration files live in database/migrations/ in the Delivery Service repo. They use Knex.js with the redshift client on port 5439. Migration tracking table: generic_webhook_knex_migrations.

| Migration file | Date | What it does |
|---|---|---|
| 20240131070646_addGenericWebhookTable.js | 2024-01-31 | Creates generic_webhook table |
| 20241204022244_addProcessingTimeFields.js | 2024-12-04 | Adds webhook_processing_timestamp and webhook_processing_delay columns |
| 20241204022715_addGenericWebhookFailureTable.js | 2024-12-04 | Creates generic_webhook_failure table |

cd database

npm install

# Set: DB_HOST, DB_PORT, DB_USER, DB_PASSWORD, DB_NAME

npx knex migrate:latest # run all pending migrations

npx knex migrate:rollback # undo the last migration batch

Integrations

Audit Portal (gitlab.com/hvlabs/kyc/audit-portal)

- S3 bucket: prod-generic-webhook-ind

- S3 path: audit-portal/<appId>/<YYYY-MM-DD>/<eventId>/<eventType>/webhook.json

  - In WEBHOOK_ENV=testing: transactionId replaces eventId in path

- Auto-calls Config API: Yes, on every config create/update using INTERNAL_APPID/INTERNAL_APPKEY

- Event types: FINISH_TRANSACTION_WEBHOOK, INTERMEDIATE_TRANSACTION_WEBHOOK, MANUAL_REVIEW_STATUS_UPDATE, APPLICATION_STATE_RESET

- Event gating: events array in audit-portal configs table; only listed event types fire

- newFlow flag: true (default) = S3 generic webhook flow. false = deprecated direct send. All configs must have newFlow: true.

- webhookPayloadBlacklistedKeys: Defaults to ["workflowId"]. Keys stripped from payload before S3 upload.

- additionalMetadataKeys: Set to ["workflowId"] to include workflowId in S3 metadata for workflow routing.

- DISABLE_WEBHOOK_DELIVERY=yes: Disables all delivery in audit-portal without code changes.

- SQS consumer: Consumes dev-audit-portal-finish-transaction. kyc_in_progress → INTERMEDIATE_TRANSACTION_WEBHOOK, any other terminal → FINISH_TRANSACTION_WEBHOOK.

- Workflow routing support: FINISH_TRANSACTION_WEBHOOK and MANUAL_REVIEW_STATUS_UPDATE (generic webhook itself is event-type agnostic)
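The S3 path convention above can be illustrated with a small key builder. This is a sketch, not code from audit-portal: the function name buildWebhookKey and its parameter names are hypothetical, and formatting the date in UTC is an assumption.

```javascript
// Hypothetical helper that assembles the object key in the documented layout:
// <source>/<appId>/<YYYY-MM-DD>/<eventId>/<eventType>/webhook.json
function buildWebhookKey({ source, appId, date, eventId, transactionId, eventType, webhookEnv }) {
  const day = date.toISOString().slice(0, 10); // YYYY-MM-DD in UTC (assumption)
  // In WEBHOOK_ENV=testing, transactionId takes the place of eventId.
  const idSegment = webhookEnv === 'testing' ? transactionId : eventId;
  return [source, appId, day, idSegment, eventType, 'webhook.json'].join('/');
}
```

For example, passing source 'audit-portal', appId 'app1', a date on 2024-01-31, and eventId 'evt-9' for a FINISH_TRANSACTION_WEBHOOK event yields audit-portal/app1/2024-01-31/evt-9/FINISH_TRANSACTION_WEBHOOK/webhook.json.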

Link-KYC (gitlab.com/hvlabs/kyc/global-dkyc)

- S3 bucket: hv-generic-webhook (env: GENERIC_WEBHOOK_S3_BUCKET)

- S3 path: link-kyc/<appId>/<YYYY-MM-DD>/<eventId>/<eventType>/webhook.json

- Does NOT call Config API: configs must be registered manually via generic webhook Config API

- Config source: Portal API (POST {PORTAL_BASE_URL}/api/v2/internal/configs/list), polled every 15 minutes by portalConfigFetcher

- Fields from portal: enableTamperCheck, events, webhookPayloadBlacklistedKeys, additionalMetadataKeys

- Defaults if fetch fails: startTransactionWebhookEnabled: true, webhookPayloadBlacklistedKeys: ['workflowId'], additionalMetadataKeys: []

- Event types:

  - START_TRANSACTION_WEBHOOK: fires on POST /v1/link-kyc/start. Gated by startTransactionWebhookEnabled. Respects webhookPayloadBlacklistedKeys and additionalMetadataKeys.

  - EXPIRED_LINK_USED: fires unconditionally when currentTime >= expiryTime in fetchKycAuthDetails(). No config gate.

- No kill switch: no equivalent of DISABLE_WEBHOOK_DELIVERY

SSL configuration

- All webhook deliveries set SSL_OP_LEGACY_SERVER_CONNECT on the HTTPS agent (not just bypass list), which supports older servers using legacy SSL renegotiation

- A new axios instance is created per sendWebhook call, which ensures per-appId SSL config isolation

- APP_IDS_TO_IGNORE_SSL_VERLIFICATION (note typo): for listed appIds, rejectUnauthorized: false is set. Use only for dev/staging
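The agent setup can be sketched with Node's built-in https and crypto modules. The function names are hypothetical and the real sendWebhook may build its agent differently. crypto.constants.SSL_OP_LEGACY_SERVER_CONNECT and the secureOptions and rejectUnauthorized agent options are standard Node.js APIs.

```javascript
const https = require('https');
const crypto = require('crypto');

// Parse a comma-separated appId list such as APP_IDS_TO_IGNORE_SSL_VERLIFICATION
// (the misspelling matches the real variable name).
function parseAppIdList(raw) {
  return (raw || '')
    .split(',')
    .map((id) => id.trim())
    .filter(Boolean);
}

function buildAgentOptions(appId, sslBypassAppIds) {
  return {
    // Applied to every delivery, not only to bypass-listed appIds.
    secureOptions: crypto.constants.SSL_OP_LEGACY_SERVER_CONNECT,
    // Certificate verification stays on unless the appId is explicitly listed.
    rejectUnauthorized: !sslBypassAppIds.includes(appId),
  };
}

// One agent per call, so settings for one appId never leak into another delivery.
function createHttpsAgent(appId, sslBypassAppIds) {
  return new https.Agent(buildAgentOptions(appId, sslBypassAppIds));
}
```

An agent built this way would then be handed to a fresh axios instance, for example through the httpsAgent option of axios.create, so that no TLS settings are shared between deliveries.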

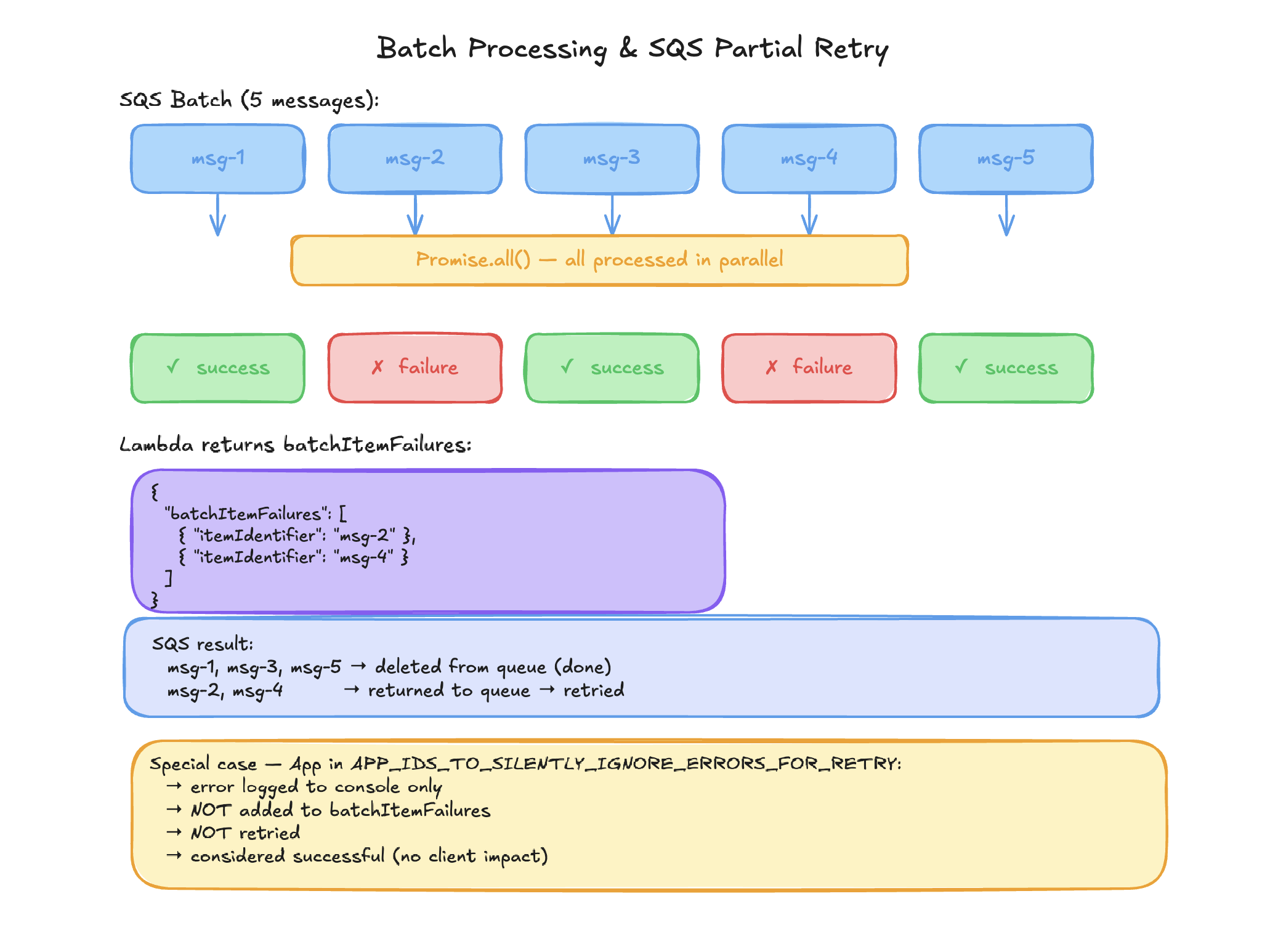

Batch processing

- Lambda receives SQS batch of records

- All records processed in parallel via Promise.all()

- Failed records have messageId added to batchItemFailures

- SQS retries only the failed records (partial batch retry, ReportBatchItemFailures)

- Records for appIds in APP_IDS_TO_SILENTLY_IGNORE_ERRORS_FOR_RETRY are NOT retried
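The batch behaviour above can be sketched as a handler factory. This is a simplified illustration: createHandler and processRecord are hypothetical names, and attaching appId to the thrown error is an assumed way of telling the handler which app a failure belongs to. The return shape, batchItemFailures with itemIdentifier entries, is the format Lambda expects when ReportBatchItemFailures is enabled on the SQS event source.

```javascript
// Build a Lambda handler around a per-record processing function.
// `silentAppIds` plays the role of APP_IDS_TO_SILENTLY_IGNORE_ERRORS_FOR_RETRY.
function createHandler(processRecord, silentAppIds = []) {
  return async function handler(event) {
    const batchItemFailures = [];

    await Promise.all(
      event.Records.map(async (record) => {
        try {
          await processRecord(record);
        } catch (error) {
          // `error.appId` is a hypothetical way to carry the appId up to the handler.
          if (silentAppIds.includes(error.appId)) return; // swallowed, not retried
          batchItemFailures.push({ itemIdentifier: record.messageId });
        }
      })
    );

    // SQS redelivers only the messages listed here.
    return { batchItemFailures };
  };
}
```

Each record has its own try/catch inside the mapped function, so one rejected record never causes Promise.all to reject and fail the whole batch.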

Installation

Delivery Service:

cd /path/to/global/generic-webhook

npm install

# No local server - Lambda function. Test by invoking handler() with a mock SQS event.

Configuration API:

cd /path/to/ind/generic-webhook

npm install

# Express app wrapped in serverless-http. Run Express app directly for local dev.

Database migrations:

cd database

npm install

# Set: DB_HOST, DB_PORT, DB_USER, DB_PASSWORD, DB_NAME

npx knex migrate:latest

Release process

To be done.

Alert configurations

| Alert | Trigger |
|---|---|
| generic-webhook-high-error-rate | CloudWatch alarm on Lambda invocation errors |
| generic-webhook-throttles | CloudWatch alarm on Lambda throttling |
| Grafana MISCELLANEOUS dashboard | Delivery metrics |

Environment variables

Delivery Service (global/generic-webhook)

| Variable | Description | Example |
|---|---|---|
| dynamoTableName | DynamoDB table name for webhook configs | webhook-configs |
| APOLLO_URL | Logging endpoint (Apollo service) | https://apollo.example.com/log |
| APPID | Apollo authentication appId | webhook-service |
| APPKEY | Apollo authentication appKey | secret-key |
| PROJECTNAME | Table name for success logs | generic_webhook |
| FAILURE_PROJECTNAME | Table name for failure logs | generic_webhook_failure |
| REDSHIFTENV | Environment tag for Redshift rows | prod |
| AXIOS_TIMEOUT | HTTP request timeout in ms (default: 30000) | 30000 |
| APP_IDS_TO_SILENTLY_IGNORE_ERRORS_FOR_RETRY | Comma-separated appIds to skip retry on error | beta-app,test-app |
| APP_IDS_TO_IGNORE_SSL_VERLIFICATION | Comma-separated appIds to skip SSL verification (note typo; do not fix) | dev-app-1,staging-app |

Configuration API (ind/generic-webhook)

| Variable | Description | Example |
|---|---|---|
| DYNAMODB_TABLE_NAME | DynamoDB table name for webhook configs | webhook-configs |
| ALLOWED_IPS | Comma-separated allowed IPs, or * for all | 10.0.0.1,10.0.0.2 or * |
| PUBLIC_CERT | RSA public key for JWT (RS256) verification | PEM-encoded public key |
| UPDATE_SECRET_DEFAULT_VALUE | Set to yes to auto-generate secret on every new config | yes |

Database migrations (database/)

| Variable | Description | Example |
|---|---|---|
| DB_HOST | Redshift cluster endpoint | cluster.abc.region.redshift.amazonaws.com |
| DB_PORT | Redshift port (default: 5439) | 5439 |
| DB_USER | Redshift username | admin |
| DB_PASSWORD | Redshift password | password |
| DB_NAME | Redshift database name (default: dev) | dev |